In this tutorial, we will learn how to scrape the web using Selenium and Beautiful Soup. I am going to use these tools to collect recipes from a food website and store them in a structured format in a database. Collecting the recipes involves two tasks:

- Get all the recipe URLs from the website using Selenium

- Convert the HTML of each recipe page into structured JSON using Beautiful Soup

For this task, I picked NDTV Food as the source of recipes.

Selenium

Selenium WebDriver automates web browsers. Its main use case is automating web applications for testing, but it can also be used for web scraping. In our case, I used it to extract all the recipe URLs.

Installation

I used the Selenium Python bindings to work with Selenium WebDriver. Through this Python API, we can access all the functionality of Selenium WebDriver for browsers such as Firefox, IE and Chrome. We can install the Selenium Python API with the command pip install selenium.

The Selenium Python API requires a web driver to interface with your chosen browser. The corresponding web drivers can be downloaded from the following links. Make sure the driver is in your PATH, e.g. /usr/bin or /usr/local/bin. For more information regarding installation, please refer to the link.

| Web browser | Web driver |
|---|---|
| Chrome | chromedriver |
| Firefox | geckodriver |
| Safari | safaridriver |
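
Before starting Selenium, you can use the standard library's shutil.which to check that a driver from the table is actually on PATH. The helper name find_webdriver is hypothetical. This is a small sketch, not part of the original project.

```python
import shutil


def find_webdriver(name="chromedriver"):
    """Return the full path of a web driver executable on PATH, or None.

    find_webdriver is a hypothetical helper; shutil.which performs the
    same PATH lookup the shell does when you type a bare command name.
    """
    return shutil.which(name)


if __name__ == "__main__":
    path = find_webdriver("chromedriver")
    if path is None:
        print("chromedriver not found; add its directory to PATH")
    else:
        print(f"Using chromedriver at {path}")
```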

I used chromedriver to automate the Google Chrome web browser, which opens the website in a separate window.
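
Below is a minimal sketch of opening the site with chromedriver. It assumes Selenium 4 and an entry URL of https://food.ndtv.com/recipes. Both the URL and the open_site helper are assumptions, not taken from the original code.

```python
# Assumed entry point for NDTV Food recipes; verify it in your browser.
NDTV_FOOD_URL = "https://food.ndtv.com/recipes"


def open_site(url=NDTV_FOOD_URL, headless=False):
    """Start Chrome through chromedriver and load `url` (hypothetical helper)."""
    # Imported here so this module can be imported without selenium installed.
    from selenium import webdriver

    options = webdriver.ChromeOptions()
    if headless:
        # Run Chrome without a visible window, e.g. on a server.
        options.add_argument("--headless=new")
    driver = webdriver.Chrome(options=options)
    driver.get(url)
    return driver


# Usage (needs Chrome and chromedriver installed):
#   driver = open_site()
#   print(driver.title)
#   driver.quit()
```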

Traversing the Sitemap of the Website

The website that we want to scrape looks like this:

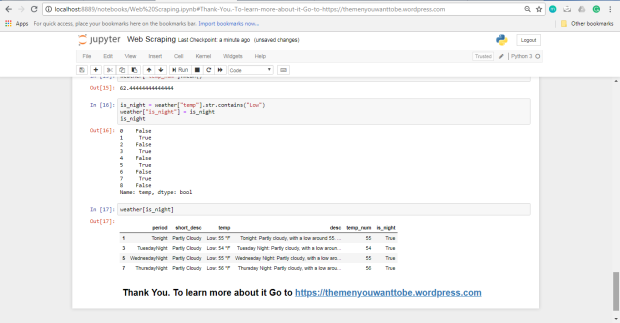

We need to collect all the groups of recipes, such as categories, cuisine, festivals, occasion, member recipes, chefs and restaurant, as shown in the image above. To do this, we select the tab element and extract the text in it. We can find the id of the tab and its attributes by inspecting the page source; in our case, the id is insidetab. From this element we can then extract the tab contents and their hyperlinks.
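
As a sketch of this step, the hypothetical helper collect_tab_links maps each tab label to its link. It assumes Selenium 4's find_element and find_elements methods. The locator strategies are passed as the plain strings behind By.ID and By.TAG_NAME, so the function itself does not need selenium imported.

```python
def collect_tab_links(driver, tab_id="insidetab"):
    """Map each tab label inside the element with id `tab_id` to its URL.

    `driver` is a Selenium WebDriver that has already loaded the page.
    "id" and "tag name" are the string values of selenium's By.ID and
    By.TAG_NAME locator strategies.
    """
    tab = driver.find_element("id", tab_id)
    links = {}
    for anchor in tab.find_elements("tag name", "a"):
        label = anchor.text.strip()
        href = anchor.get_attribute("href")
        # Skip anchors with no visible label or no link target.
        if label and href:
            links[label] = href
    return links
```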

We need to follow each of these collected links and construct a link hierarchy for the second level.
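
Below is one way to build that second level. It assumes that each group page can be handled by a link-extracting function, such as a variant of the tab extractor. The helper build_sub_category_links and the extract_links hook are hypothetical names.

```python
def build_sub_category_links(driver, category_links, extract_links):
    """Visit each first-level link and collect its second-level links.

    `category_links` maps a group name (e.g. "Cuisine") to its URL.
    `extract_links` is a hypothetical callable returning {label: url}
    for the currently loaded page; which element holds those links on
    each page must be checked with the browser inspector.
    """
    sub_category_links = {}
    for group, url in category_links.items():
        driver.get(url)  # load the group page in the same browser window
        sub_category_links[group] = extract_links(driver)
    return sub_category_links
```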

When you load a leaf of the sub_category_links dictionary, you will encounter pages with a ‘Show More’ button, as shown in the image below. Selenium shines at tasks like this, since we can click the button programmatically with the element.click() method.
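
Below is a sketch of the click loop. It keeps clicking while a visible ‘Show More’ button exists and caps the number of clicks, so a page that never stops loading cannot hang the scraper. The XPath, the click_show_more name and the one-second pause are assumptions to tune against the real page.

```python
import time


def click_show_more(driver, xpath="//*[normalize-space(text())='Show More']",
                    max_clicks=200, pause=1.0):
    """Click a 'Show More' button until it disappears; return the click count.

    The XPath is an assumed description of the button's markup; adjust
    it to what the inspector shows. "xpath" is the value of By.XPATH.
    """
    clicks = 0
    while clicks < max_clicks:
        # find_elements returns [] instead of raising when nothing matches.
        buttons = driver.find_elements("xpath", xpath)
        visible = [b for b in buttons if b.is_displayed()]
        if not visible:
            break
        visible[0].click()
        clicks += 1
        time.sleep(pause)  # give the page time to load the next batch
    return clicks
```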

Now let’s get all the recipes on NDTV Food!
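
Putting the pieces together, here is a hedged sketch of collecting recipe URLs from every leaf page. The expand hook (for example, a ‘Show More’ clicker) and the is_recipe_url filter are hypothetical. The actual pattern that distinguishes recipe links on NDTV Food has to be checked in the inspector.

```python
def collect_recipe_urls(driver, leaf_urls, expand, is_recipe_url):
    """Visit every leaf page, expand it, and gather unique recipe URLs.

    `expand(driver)` should load all items on the page, and
    `is_recipe_url(href)` decides which links are recipes; both are
    hypothetical hooks supplied by the caller.
    """
    seen = set()
    recipe_urls = []
    for url in leaf_urls:
        driver.get(url)
        expand(driver)
        for anchor in driver.find_elements("tag name", "a"):
            href = anchor.get_attribute("href")
            if href and is_recipe_url(href) and href not in seen:
                seen.add(href)
                recipe_urls.append(href)  # keep first-seen order
    return recipe_urls
```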

Beautiful Soup

Now that we have extracted all the recipe URLs, the next task is to open these URLs and parse the HTML to extract the relevant information. We will use the Requests Python library to open the URLs and the excellent Beautiful Soup library to parse the downloaded HTML.
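
Below is a minimal sketch of the download-and-parse step. It assumes the requests and beautifulsoup4 packages are installed. fetch_soup is a hypothetical helper; it accepts an optional session so that one connection pool can be reused across many recipe pages.

```python
from bs4 import BeautifulSoup


def fetch_soup(url, session=None, timeout=10):
    """Download `url` and return its parsed BeautifulSoup tree."""
    if session is None:
        import requests  # imported lazily; any object with .get() works
        session = requests.Session()
    response = session.get(url, timeout=timeout)
    response.raise_for_status()  # fail loudly on 4xx/5xx responses
    # "html.parser" is Python's built-in parser, so no extra install is needed.
    return BeautifulSoup(response.text, "html.parser")
```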

Here’s what an example recipe page looks like:

soup is the root of the parse tree of our HTML page, and it allows us to navigate and search elements in the tree. Let’s get the div containing the recipe and restrict further searches to this subtree.

Inspect the page source to get the class name of the recipe container. In our case, the recipe container’s class name is recp-det-cont.
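
Once the class name is known, restricting the search takes a single find call. The sample HTML below is an invented stand-in for a recipe page, and recipe_container is a hypothetical helper name.

```python
from bs4 import BeautifulSoup


def recipe_container(soup):
    """Return the div wrapping the recipe, or None if the page lacks it."""
    # class_ (with an underscore) filters on the CSS class, because
    # `class` is a reserved word in Python.
    return soup.find("div", class_="recp-det-cont")


# Invented sample markup, only to show the call.
html = """
<html><body>
  <div class="sidebar">ads</div>
  <div class="recp-det-cont"><h1>Masala Dosa</h1></div>
</body></html>
"""
container = recipe_container(BeautifulSoup(html, "html.parser"))
```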

Let’s start by extracting the name of the dish. get_text() extracts all the text inside the subtree.
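
The original does not say which tag holds the dish name. The sketch below assumes it is the first h1 inside the recipe container (recipe_name is a hypothetical helper). Passing a separator to get_text keeps words from nested tags apart.

```python
from bs4 import BeautifulSoup


def recipe_name(container, tag="h1"):
    """Return the dish name, assuming it sits in the container's first <h1>."""
    heading = container.find(tag)
    # get_text(" ", strip=True) joins every text node in the subtree with a
    # space and drops whitespace-only pieces; the guard avoids calling
    # get_text on None when the tag is missing.
    return heading.get_text(" ", strip=True) if heading else None
```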

Now let’s extract the source of the dish’s image. Inspecting the element reveals that the img is wrapped in a picture element inside a div of class art_imgwrap.

BeautifulSoup allows us to navigate the tree as desired.
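
Following the structure described above (div.art_imgwrap, then picture, then img), here is a sketch that reads the image URL with attribute-style navigation. recipe_image is a hypothetical name, and the guard clauses are our addition.

```python
from bs4 import BeautifulSoup


def recipe_image(container):
    """Return the dish image URL from div.art_imgwrap > picture > img."""
    wrap = container.find("div", class_="art_imgwrap")
    if wrap is None or wrap.picture is None or wrap.picture.img is None:
        return None
    # .picture and .img each return the first matching descendant tag;
    # .get() returns None instead of raising if "src" is missing.
    return wrap.picture.img.get("src")
```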

Finally, the ingredients and instructions are li elements contained in divs of class ingredients and method, respectively. While find returns the first element matching the query, find_all returns a list of all matching elements.
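
Both sections share the same shape, so one hypothetical helper, list_items, can cover them. It uses find for the section div and find_all for its li elements.

```python
from bs4 import BeautifulSoup


def list_items(container, div_class):
    """Return the stripped text of every <li> inside div.<div_class>."""
    section = container.find("div", class_=div_class)  # first match or None
    if section is None:
        return []
    # find_all returns every matching descendant, in document order.
    return [li.get_text(" ", strip=True) for li in section.find_all("li")]
```

For example, list_items(container, "ingredients") and list_items(container, "method") give the two lists.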

Overall, this project allowed me to extract 2031 recipes, each stored as a JSON record.
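
Below is a sketch of how the extracted fields could be combined into one JSON record per page. The key names (url, name, image, ingredients, instructions) and the h1 location of the name are assumptions, not the project's actual schema.

```python
import json

from bs4 import BeautifulSoup


def parse_recipe(html, url=None):
    """Turn one recipe page into a dict; the keys are illustrative only."""
    soup = BeautifulSoup(html, "html.parser")
    container = soup.find("div", class_="recp-det-cont")
    if container is None:
        return None  # not a recipe page, or the layout changed

    def items(div_class):
        # All <li> texts under the first div with this class, or [].
        section = container.find("div", class_=div_class)
        return [li.get_text(" ", strip=True) for li in section.find_all("li")] if section else []

    heading = container.find("h1")  # assumed location of the dish name
    wrap = container.find("div", class_="art_imgwrap")
    img = wrap.find("img") if wrap else None
    return {
        "url": url,
        "name": heading.get_text(" ", strip=True) if heading else None,
        "image": img.get("src") if img else None,
        "ingredients": items("ingredients"),
        "instructions": items("method"),
    }


def to_json(recipe):
    """Serialize a recipe dict, keeping non-ASCII text readable."""
    return json.dumps(recipe, ensure_ascii=False, indent=2)
```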